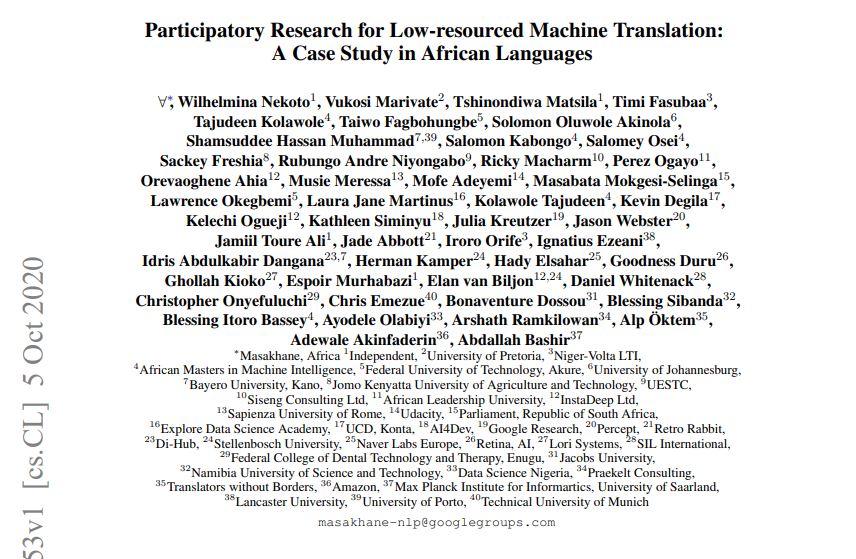

The paper, titled “Participatory Research for Low-resourced Machine Translation: A Case Study in African Languages”, has 50 authors from across Africa and beyond, including InstaDeep’s Kelechi Ogueji and Orevaoghene Ahia, both based in our Lagos office. It has been accepted to “Findings”, a new initiative being trialled this year as a sister publication to the EMNLP conference. Findings is peer-reviewed and, according to the organisers, allows more high-quality papers to be accepted than the main conference can accommodate.

Participatory and community research for low-resource languages

The publication describes how Masakhane developed Machine Translation (MT) benchmarks for more than 30 African languages, many of which had never had such benchmarks before. It argues that “low-resource” means more than limited data availability: it also reflects other systemic problems. The authors therefore propose participatory, community-driven research as a way to address these problems, and show how a community of African NLP researchers can tackle their local problems despite the many challenges. Part of the work concentrates on developing the benchmarks and evaluating some of the models on three domains: JW300 (religious), TED (more general) and Covid (health). The participants also report the results of these models and suggest areas for future work.

Pidgin and Tiv MT models

The InstaDeep pair’s main contributions are building Pidgin and Tiv Machine Translation (MT) models and evaluating Pidgin translation results on datasets from the three domains mentioned above. This is not the first time Kelechi and Orevaoghene have excelled in NLP research. Last year, the two AI Research Engineers developed the world’s first Pidgin-to-English NLP translation model.

EMNLP 2020 runs from Sunday 8th November to Thursday 12th November. The paper is available in full on arXiv.